Wireless VR Glove: Minimalist Controller

In the 2013 adaptation of Ender's Game, Harrison Ford's character slips a metallic device over his hand to control gravity in the training room. This scene inspired me, as I've been trying to imagine VR controllers that can be used alongside a mouse and keyboard. The controller used by Ford seemed convenient to put on, offered a lot of finger freedom, and would probably allow throwing VR objects without falling off. The design also immediately made me think of the eventual conclusion of the Valve controller prototypes...

Initial thoughts

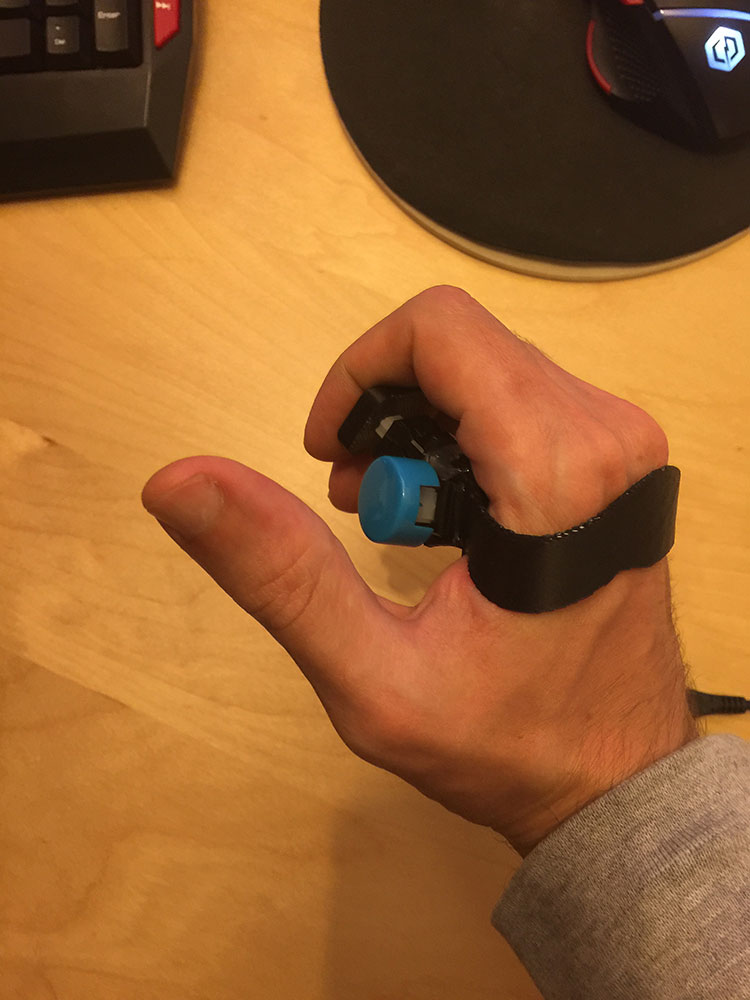

The first 3D test prints work for me since I used my own hand measurements, but it should be simple enough to change and reprint for more hand shapes. This is only a mockup, but the plan is to split the battery and electronics between the palm and knuckles.

This "hand-clamp" shape is much easier to put on than the Fitbit bracelet I previously built. I still plan to use rings or thumb buttons, so this shortens the wires too. Plus, it doesn't get in the way when using a keyboard or a mouse. Everything so far seems really promising.

I'm looking forward to cramming a Wi-Fi chip and battery inside :D

Proof of concept

I decided to simplify the requirement list while still figuring out what exactly this controller does. I'm shelving wireless functionality in favor of spending more time on the ergonomics. An Arduino Pro Micro fits with the USB cable neatly out of the way. Furthermore, the Leap calibration doesn't seem too bothered by the 3D printed parts, which appear white in IR light.

Arcade buttons were taken apart and hot-glued together. It feels OK, and I can still use the keyboard with the controller on, but not comfortably yet. The trigger button is too far forward. It's a delicate balance between being a comfortable button and getting in the way of typing on the keyboard.

First working prototype

The Leap Motion does a really good job when it can see the hand, but certain gestures that occlude the hand cause jitter and tracking loss. Moreover, tilting a fist away from your face (like aiming a pistol up and down) is nearly invisible to the Leap's infrared cameras. To cover that gap, a BNO055 sensor provides rotational data while the Leap Motion does positional tracking.
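To make the split between the two sensors concrete, here is a minimal sketch of how a pose could be assembled from them: the position comes from the camera, the rotation from the IMU, and an attached point (say, the revolver's muzzle) is found by rotating a local offset. The names `Pose`, `fuse` and `attached_point` and the 15 cm offset are hypothetical, and the sketch assumes both sensors already report in the same coordinate frame, which a real setup would have to calibrate.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]
Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def rotate(q: Quat, v: Vec3) -> Vec3:
    """Rotate vector v by the unit quaternion q."""
    w, x, y, z = q
    vx, vy, vz = v
    # t = 2 * cross(q_xyz, v)
    tx = 2.0 * (y * vz - z * vy)
    ty = 2.0 * (z * vx - x * vz)
    tz = 2.0 * (x * vy - y * vx)
    # v' = v + w * t + cross(q_xyz, t)
    return (
        vx + w * tx + (y * tz - z * ty),
        vy + w * ty + (z * tx - x * tz),
        vz + w * tz + (x * ty - y * tx),
    )


@dataclass
class Pose:
    position: Vec3  # from the camera-based tracker (hypothetical input)
    rotation: Quat  # from the IMU (hypothetical input)


def fuse(camera_position: Vec3, imu_rotation: Quat) -> Pose:
    """Take position from one sensor and orientation from the other."""
    return Pose(position=camera_position, rotation=imu_rotation)


def attached_point(pose: Pose, local_offset: Vec3 = (0.0, 0.0, 0.15)) -> Vec3:
    """World position of a point fixed to the hand, e.g. a gun muzzle."""
    r = rotate(pose.rotation, local_offset)
    return (
        pose.position[0] + r[0],
        pose.position[1] + r[1],
        pose.position[2] + r[2],
    )
```

The point of the split is that a fist tilting out of the camera's view still has a valid rotation, so the held object keeps turning even while the position estimate is coasting.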

I've been watching a lot of Westworld lately, so making a cowboy-inspired demo was most motivating. The wild west revolver asset is free from the Unity store, and it's ultra bad-ass to hold. I can't wait to animate it with the buttons. With this combination of video positioning and sensor orientation, the tracking is really good. It's too late in the evening to solve the lower-than-normal fps (75-85), but I'm sure the cryptic warning being spit out by the serial communication code is to blame. Once we're back at 90fps the objects should rotate in sync with the Leap hands.

Optimizing frame rate

I didn't find one optimization that brought the frame rate to a constant 90fps, but several together seemed to do the trick. The one I spent the most time on was installing a USB 3 expansion card. My motherboard only has two PCI Express slots and the graphics card takes two, so I mounted the graphics card outside to make room. Four unique 3D printed parts fasten the graphics card to glued layers of MDF wood that act like spacers. The MDF is bolted to the case's original side panels. I'm optimistic about the temperatures. More photos about the build here

Ultimately, this didn't seem to make much of a difference. The original thought was that running the Leap Motion and DK2 over USB 2 was a bottleneck, but switching to USB 3 didn't offer a big boost. However, at least now the Oculus software no longer says my system is not supported.

I rewrote the Arduino firmware code cleanly and minimally, stripping out the code that waits for a 2-byte request before sending out the orientation data. Instead, the Arduino sends at a steady 60fps, and the Unity serial port uses the latest data and tweens in between (there were race conditions and timeout errors when trying to send the data at 90fps).
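As an illustration of the tweening idea (not the actual Unity code), here is a minimal sketch that interpolates between the two most recent 60Hz orientation samples with spherical linear interpolation. The function names and the `(timestamp, quaternion)` sample layout are assumptions made for this example. Note that interpolating between the previous and latest sample renders up to one sample period (about 16.7ms) behind the sensor.

```python
import math
from typing import Tuple

Quat = Tuple[float, float, float, float]  # (w, x, y, z), assumed unit length


def slerp(q0: Quat, q1: Quat, t: float) -> Quat:
    """Spherical linear interpolation from q0 (t=0) to q1 (t=1)."""
    dot = sum(a * b for a, b in zip(q0, q1))
    if dot < 0.0:
        # q and -q are the same rotation; flip to take the shorter arc.
        q1 = tuple(-c for c in q1)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical rotations: plain lerp avoids dividing by ~0.
        out = tuple(a + t * (b - a) for a, b in zip(q0, q1))
    else:
        theta = math.acos(dot)
        s0 = math.sin((1.0 - t) * theta) / math.sin(theta)
        s1 = math.sin(t * theta) / math.sin(theta)
        out = tuple(s0 * a + s1 * b for a, b in zip(q0, q1))
    norm = math.sqrt(sum(c * c for c in out))
    return tuple(c / norm for c in out)


def orientation_at(render_time: float,
                   prev: Tuple[float, Quat],
                   latest: Tuple[float, Quat]) -> Quat:
    """Orientation for a render frame that falls between two samples.

    prev and latest are (timestamp_seconds, quaternion) pairs.
    """
    t0, q0 = prev
    t1, q1 = latest
    if t1 <= t0:
        return q1
    t = (render_time - t0) / (t1 - t0)
    t = min(max(t, 0.0), 1.0)  # clamp: never extrapolate past a sample
    return slerp(q0, q1, t)
```

With samples arriving every 1/60s and frames drawn every 1/90s, each frame simply asks for the orientation at its own timestamp, so the sender and the renderer never have to run in lockstep.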

Unity runs the serial port in a separate thread that waits for updates at a consistent sample rate. This works fantastically, producing very smooth orientation of an object in space while in VR, since the render thread isn't blocked by the serial port waiting for data. I'm surprised that a 60Hz sampling rate produces such good results since I thought VR required 90fps; I guess only the displays need data at 90fps. However, that still didn't produce perfect motion: while the orientation was silky smooth, moving the object's position caused sharp ghosting effects. Stranger still, the positional data came from the Leap Motion, which was rendering the hands much more smoothly.
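The threading pattern described here can be sketched in a few lines: a background thread blocks on the slow read and stores only the newest sample, while the render loop grabs whatever is current without ever waiting. This is a generic illustration, not the Unity implementation; `LatestSample` and `read_sample` are hypothetical names, and `read_sample` stands in for whatever blocking serial read is used.

```python
import threading
from typing import Any, Callable, Optional


class LatestSample:
    """Keeps only the newest value produced by a blocking reader."""

    def __init__(self, read_sample: Callable[[], Optional[Any]]):
        # read_sample blocks until data arrives; returning None means
        # the source is closed (a convention assumed for this sketch).
        self._read_sample = read_sample
        self._lock = threading.Lock()
        self._latest: Optional[Any] = None
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self) -> None:
        self._thread.start()

    def _run(self) -> None:
        while True:
            sample = self._read_sample()  # may block; render loop is unaffected
            if sample is None:
                return
            with self._lock:
                self._latest = sample  # overwrite: stale samples are dropped

    def get(self) -> Optional[Any]:
        """Non-blocking read of the most recent sample (None if none yet)."""
        with self._lock:
            return self._latest

    def join(self, timeout: Optional[float] = None) -> None:
        self._thread.join(timeout)
```

A render loop would call `get()` once per frame. Because the reader overwrites rather than queues, a slow frame never builds up a backlog of old orientations.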

The final solution was changing the textures to use lower contrast colors. It turns out the low-persistence displays in the Oculus DK2 suffer from a side effect that's made much worse by high contrast colors.

The demo now runs at a satisfying 90-95fps.

Conclusions

- Much improved object manipulation by fusing button sensors, orientation, and Leap Motion data (while preserving finger tracking)

- With infinite funds, the obvious next step would be implementing Valve's SteamVR Tracking for an incrementally better solution

- Cost and setup of a Lighthouse solution isn't always practical, so it's good to know adequate tracking can be done with mounted cameras and IMUs

- Although finger tracking evokes good VR presence, the inconsistent nature of gesture recognition makes it a compromise compared with holding a positional tracker with accurate triggers/buttons